In product development, almost every creation passes through one phase before it reaches the public eye: beta testing. More specifically, closed beta. It’s the exclusive stage where a carefully selected group of users gets access to the product in its near‑final state. These users are expected to simulate real use and deliver insights that improve the product. But does closed beta feedback genuinely reflect how real users will interact with your product once it launches? Can you trust it to reveal authentic usage patterns and meaningful insights? This question lies at the heart of modern product strategy, and this article unpacks it in detail.

I. What Is Closed Beta Testing? (A Foundation)

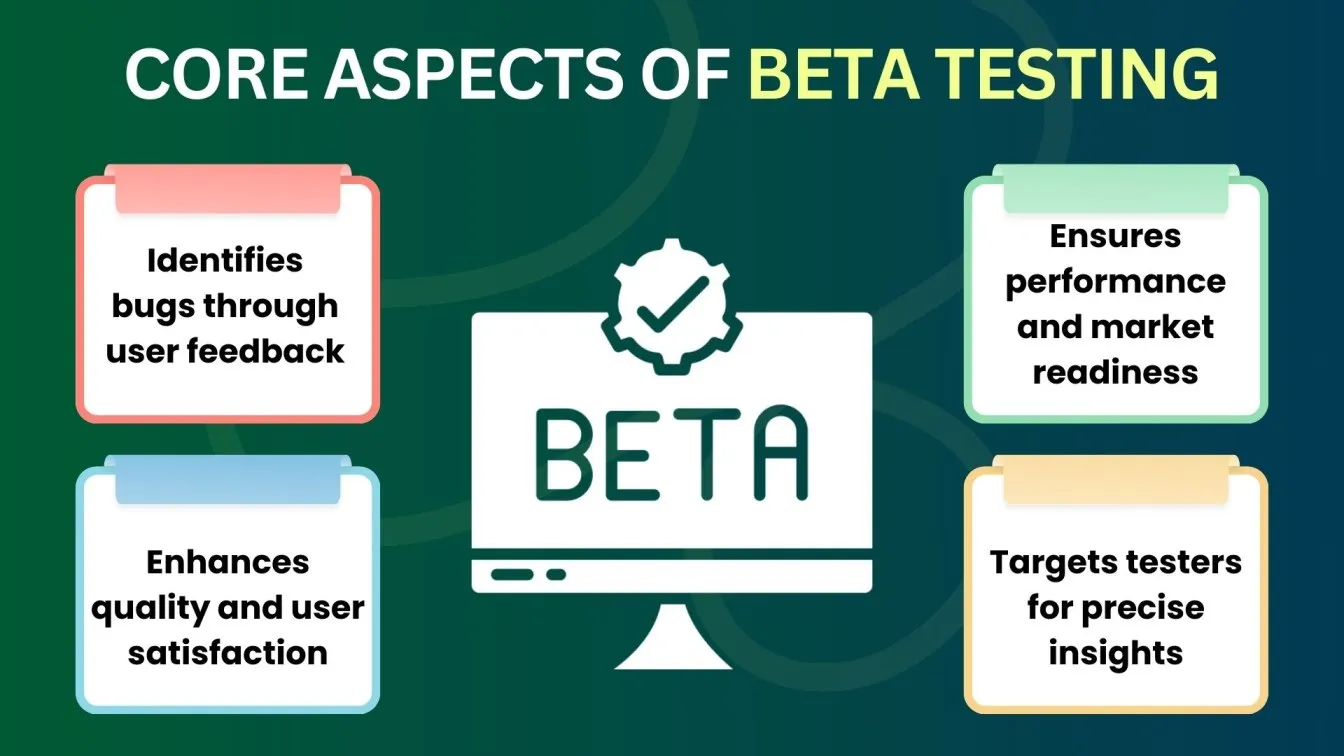

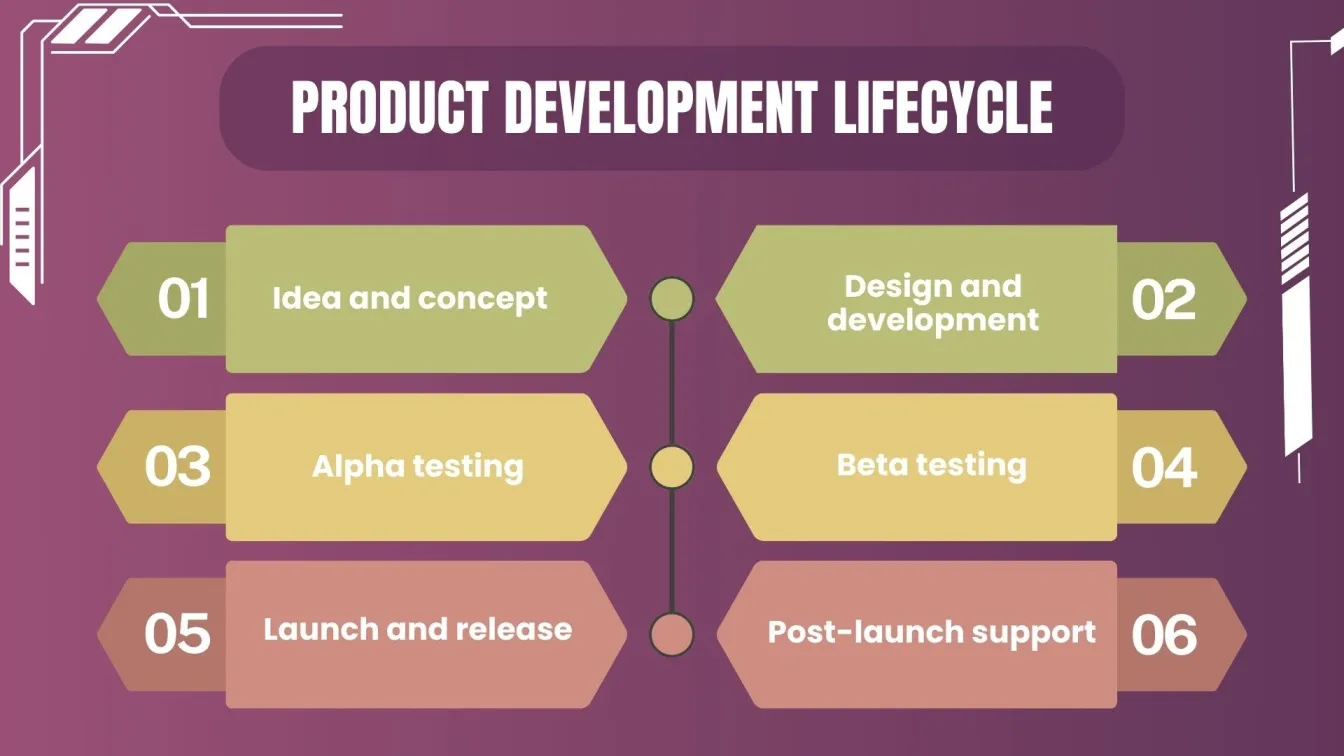

At its essence, closed beta testing is a phase in the product lifecycle where a limited audience is invited to use a product that’s nearly complete but still undergoing refinement. Unlike open beta — which casts a wide net and lets anyone participate — closed beta involves careful curation of participants. This might include existing customers, industry professionals, early adopters, or even internal staff outside of QA teams.

The aim is twofold: 1) uncover bugs that internal teams missed and 2) gather feedback that feels authentic and detailed. Those goals sound practical on paper, yet there is real debate about how representative a beta group can be once the product scales out to millions of real users.

II. The Promise vs. the Reality of Beta Feedback

Closed beta is widely promoted as a “mini real world” — a testing ground where user behaviours can forecast broader adoption patterns. Product teams often lean on this phase to predict future usage, refine UX flows, and stress‑test performance under diverse conditions.

But there are significant caveats:

1. The Population Isn’t Always Representative

Beta testers don’t always mirror the demographics, behaviours, or expectations of the eventual user base. In one large‑scale study that compared beta testers to standard users, researchers found similarities in aspects like hardware and operating systems. Yet significant disparities existed in geography and user backgrounds, meaning that some usability or localisation insights might never be surfaced.

This gap challenges the assumption that closed beta feedback automatically scales to “real usage.” The smaller, more controlled group simply doesn’t reflect the diversity of the larger market.
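One way to make this gap visible is to compare the mix of your beta cohort with the mix you expect at launch. The sketch below is illustrative only: the region labels, the tester counts, and the target shares are made‑up assumptions, and total variation distance is just one simple measure of mismatch, not a method taken from the study mentioned above.

```python
from collections import Counter


def share_by_group(labels):
    """Return each group's share of the total as a dict."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items()}


def total_variation_distance(beta_shares, target_shares):
    """Half the sum of absolute share differences.

    0.0 means the two mixes are identical, 1.0 means they do not overlap.
    """
    groups = set(beta_shares) | set(target_shares)
    return 0.5 * sum(
        abs(beta_shares.get(g, 0.0) - target_shares.get(g, 0.0)) for g in groups
    )


# Hypothetical beta cohort: one region label per tester.
beta_regions = ["NA"] * 70 + ["EU"] * 25 + ["APAC"] * 5

# Hypothetical expected market mix at launch.
target_shares = {"NA": 0.40, "EU": 0.30, "APAC": 0.30}

beta_shares = share_by_group(beta_regions)
gap = total_variation_distance(beta_shares, target_shares)

# Regions that are under-represented in the beta, with the size of the shortfall.
under_represented = {
    g: round(target_shares[g] - beta_shares.get(g, 0.0), 2)
    for g in target_shares
    if target_shares[g] > beta_shares.get(g, 0.0)
}

print(f"mismatch: {gap:.2f}")
print(under_represented)
```

A large mismatch does not invalidate the beta, but it tells you which segments the feedback says little about, such as localisation issues in an under‑represented region.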

2. Feedback Biases Can Skew Results

Beta participants often differ psychologically from average users. They may be more technically inclined, more enthusiastic, or more motivated to engage deeply with the product. These traits can influence feedback — sometimes positively, sometimes in misleading ways.

For example, testers with a vested interest in improvements might focus intensely on niche features, while typical users might never interact with those features at all. Or testers might overlook common pain points because they are more forgiving or more curious than your general audience.

3. Confirmation Bias Is Real

When users know they’re part of a beta — especially a closed one — they may unconsciously confirm their preconceptions rather than explore the product naturally. They may focus on what they expect to be wrong, rather than discovering what genuinely matters in authentic usage.

This can lead product teams astray: they may read these biased signals as widespread issues when the signals are artifacts of tester mindset rather than real obstacles.

III. Why Closed Beta Still Matters (Despite Limitations)

If closed beta feedback isn’t a perfect mirror of real usage, why do teams keep doing it? The answer lies in structured insights and controlled learning.

1. Discovery of Latent Bugs and Usability Issues

Beta tests — even closed ones — expose products to real user environments, revealing bugs that never appeared in lab testing. Users might run the product on unexpected hardware combinations, unusual configurations, or network conditions that internal QA simply can’t duplicate.

This is one of closed beta’s most practical values: you’re no longer testing against artificial constraints — you’re seeing how the product behaves in the wild.

2. Feedback as a Navigation Tool

Closed beta gives teams a directional compass — not necessarily a precise map. If many testers struggle with onboarding, that flags a genuine area for improvement. If a feature consistently underperforms, it becomes easier to prioritise changes before launch.

But as multiple industry voices point out, you have to use the right instruments — analytics, structured surveys, in‑product prompts — not just freeform comments, to get something you can act on.

3. Early Brand Advocates and Community Building

Another often‑underrated benefit is community creation. Beta testers can become champions of your product — engaged participants who help shape features, advocate for the brand, and contribute to future loyalty.

This social dimension is not about mirroring “real usage” but about nurturing a cohort that grows into your early customer base.

IV. When Closed Beta Feedback Does Reflect Real Usage

Closed beta feedback can be predictive if the conditions are right.

1. If Testers Match Target User Personas

This is the big one. A closed beta that draws testers from the actual target audience — professionals who use products like yours every day, customers who will pay for the product, or segments you specifically want to serve — will provide the most predictive signals about real usage.

This means investing time in recruitment and selection, not just throwing out invites.

2. If Metrics Are Weighted Over Opinions

Raw feedback comments are useful, but they capture what testers say rather than what they do. Quantitative metrics such as feature usage frequency, time spent per task, and abandonment rates often reveal where real usage diverges from perception. These data points tend to correlate more strongly with post‑launch behaviour because they are rooted in actual interaction patterns, not subjective impressions.
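As a rough illustration, metrics like these can be derived from a simple event log. The event schema below (`user`, `task`, `event`, `ts`) and the sample data are assumptions made for this sketch, not the format of any particular analytics tool.

```python
from statistics import median

# Hypothetical event log: timestamps are seconds since the session began.
events = [
    {"user": "u1", "task": "onboarding", "event": "start", "ts": 0},
    {"user": "u1", "task": "onboarding", "event": "complete", "ts": 90},
    {"user": "u2", "task": "onboarding", "event": "start", "ts": 10},
    {"user": "u2", "task": "onboarding", "event": "complete", "ts": 160},
    {"user": "u3", "task": "onboarding", "event": "start", "ts": 20},
    {"user": "u1", "task": "export", "event": "start", "ts": 200},
    {"user": "u1", "task": "export", "event": "complete", "ts": 230},
]


def task_metrics(events):
    """Compute attempts, abandonment rate, and median duration per task.

    Simplification: only the latest start per (user, task) pair is kept.
    """
    starts, durations = {}, {}
    for e in sorted(events, key=lambda e: e["ts"]):
        key = (e["user"], e["task"])
        if e["event"] == "start":
            starts[key] = e["ts"]
        elif e["event"] == "complete" and key in starts:
            durations[key] = e["ts"] - starts[key]

    metrics = {}
    for task in {task for _, task in starts}:
        attempted = [k for k in starts if k[1] == task]
        done = [durations[k] for k in attempted if k in durations]
        metrics[task] = {
            "users_attempted": len(attempted),
            "abandonment_rate": 1 - len(done) / len(attempted),
            "median_seconds": median(done) if done else None,
        }
    return metrics


metrics = task_metrics(events)
for task, values in sorted(metrics.items()):
    print(task, values)
```

In this made‑up log, one of three testers starts onboarding and never finishes. That is the kind of signal a freeform comment box may never surface.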

3. If Post‑Launch Validation Is Built Into the Process

Beta feedback becomes much more reliable when it is embedded in a cycle of validation after launch. Comparing beta insights with early post‑release usage analytics lets teams see what held up in real usage and what didn’t.

Closing this loop helps refine the predictive power of future beta tests.
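A minimal sketch of such a comparison follows. The metric names, the values, and the 20% tolerance are all hypothetical; the right threshold depends on the product and on how noisy each metric is.

```python
def compare_metrics(beta, launch, tolerance=0.20):
    """Flag which beta metrics stayed within `tolerance` (relative) after launch."""
    report = {}
    for name in sorted(beta.keys() & launch.keys()):
        b, l = beta[name], launch[name]
        if b == 0:
            # Relative change is undefined for a zero baseline.
            report[name] = {"relative_change": None, "held_up": l == 0}
            continue
        rel = (l - b) / b
        report[name] = {"relative_change": rel, "held_up": abs(rel) <= tolerance}
    return report


# Hypothetical numbers measured during the beta and in the first weeks after launch.
beta = {
    "onboarding_completion": 0.80,
    "weekly_feature_use": 0.50,
    "median_task_seconds": 120,
}
launch = {
    "onboarding_completion": 0.72,
    "weekly_feature_use": 0.20,
    "median_task_seconds": 130,
}

report = compare_metrics(beta, launch)
for name, row in report.items():
    status = "held up" if row["held_up"] else "diverged"
    print(f"{name}: {row['relative_change']:+.0%} ({status})")
```

Metrics that diverge sharply are the ones to treat with more caution in the next beta, or to investigate for a cohort effect such as unusually enthusiastic testers.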

V. Proven Practices to Improve Predictiveness

Rather than abandoning closed beta feedback, teams can focus on improving its alignment with real usage. Here are some techniques:

1. Blend Closed Beta with Targeted Analytics

Install heat‑maps, session tracking, and event logging to quantify behaviours, and pair this with qualitative feedback to provide richer context.

2. Encourage Depth, Not Volume

Rather than asking for broad opinions, assign focused tasks: “Can you complete this logical flow?” or “Try this core feature and record your time.” Specific goals reduce noise and give you actionable data.

3. Recruit Based on Use Cases

Include testers who match key use cases you expect in the real world. For instance, if your product is a design tool used by agencies, recruit professional designers — not casual hobbyists.

4. Close the Feedback Loop

Share back what you learned, how you interpreted it, and what changes you made. Closing the loop not only keeps future testers better aligned with your goals but also helps you validate assumptions long after the beta phase ends.

VI. When Closed Beta Misleads (And How to Avoid It)

Understanding when beta feedback goes astray is the first step to improving its accuracy.

1. When Feedback Isn’t Structured

Open‑ended commentary is valuable, but without structure, it can misrepresent priority. Surveys with consistent questions increase comparability across users.
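To show why consistent questions help, here is a small sketch that aggregates the same 1 to 5 rating questions across testers. The question names and scores are invented for illustration.

```python
from statistics import mean

# Hypothetical survey: every tester answers the same 1-5 questions (5 = best).
QUESTIONS = ["onboarding_ease", "export_ease", "overall"]

responses = [
    {"tester": "t1", "onboarding_ease": 2, "export_ease": 4, "overall": 4},
    {"tester": "t2", "onboarding_ease": 3, "export_ease": 5, "overall": 4},
    {"tester": "t3", "onboarding_ease": 2, "export_ease": 4, "overall": 3},
]


def summarize(responses, questions):
    """Return response count, mean score, and share of low scores per question."""
    summary = {}
    for q in questions:
        scores = [r[q] for r in responses if r.get(q) is not None]
        if not scores:
            continue  # nobody answered this question
        summary[q] = {
            "n": len(scores),
            "mean": mean(scores),
            "share_low": sum(s <= 2 for s in scores) / len(scores),
        }
    return summary


summary = summarize(responses, QUESTIONS)

# The lowest-scoring question is a candidate priority, comparable across testers.
weakest = min(summary, key=lambda q: summary[q]["mean"])
print(weakest, summary[weakest])
```

Because every tester answers the same questions on the same scale, the scores can be ranked directly, which is much harder to do with freeform comments.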

2. When Testers Are Too Passionate or Too Technical

A tester with deep product knowledge or enormous enthusiasm may not reflect average users. Mitigation: diversify tester profiles to include novices alongside experts.
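One simple way to enforce that mix is to select testers against per‑profile quotas instead of accepting the first applicants. The sketch below assumes a hypothetical `experience` field on each applicant and made‑up quotas.

```python
import random
from collections import Counter


def pick_testers(applicants, quotas, seed=0):
    """Randomly pick up to `quota` applicants from each experience level."""
    rng = random.Random(seed)  # fixed seed so the selection is reproducible
    selected = []
    for level, quota in quotas.items():
        pool = [a for a in applicants if a["experience"] == level]
        selected.extend(rng.sample(pool, min(quota, len(pool))))
    return selected


# Hypothetical applicant pool: experts apply far more often than novices.
applicants = [{"id": i, "experience": "expert"} for i in range(20)]
applicants += [{"id": 100 + i, "experience": "novice"} for i in range(6)]

quotas = {"novice": 5, "expert": 5}
cohort = pick_testers(applicants, quotas)

mix = Counter(t["experience"] for t in cohort)
print(dict(mix))
```

Without quotas, a pool like this one would produce a cohort dominated by experts simply because more of them applied.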

3. When There Is No Real Usage Pressure

Beta tests that artificially incentivise usage, for example by paying testers per session, can inflate engagement and weaken the link between beta usage and real usage after launch.

VII. Final Assessment: Can Closed Beta Feedback Truly Reflect Real Usage?

The honest answer is yes — but with conditions.

Closed beta feedback can reflect real usage patterns if the beta is carefully designed, strategically structured, and analysed through both quantitative and qualitative lenses. Beta testing isn’t magic; it’s a forecast. Like all forecasts, its accuracy depends on the methodology, the calibration of inputs, and the context in which it’s interpreted.

The notion that closed beta feedback perfectly predicts real usage is optimistic, but recognising it as a directional tool transforms it from a vanity metric into a robust research asset. When teams treat beta feedback as a starting point, not a final verdict, it becomes a powerful catalyst for better products and deeper customer understanding.