In software engineering today, Artificial Intelligence (AI) is more than a buzzword. Developers use AI across the software lifecycle, from autocomplete code suggestions to automated test generation. One of the most contested and consequential applications is AI-generated test reports: detailed summaries of software tests produced or assisted by AI systems. The central question is whether these reports genuinely help developers. The answer depends on how they are used, what problems they solve, and how teams integrate them into everyday workflows.

In this article, we’ll explore the promise, pitfalls, practical impact, and future evolution of AI-generated test reports. Throughout, we’ll balance optimism with realism — explaining where AI shines, where it stumbles, and how developers can maximize value while minimizing risk.

What Are AI-Generated Test Reports?

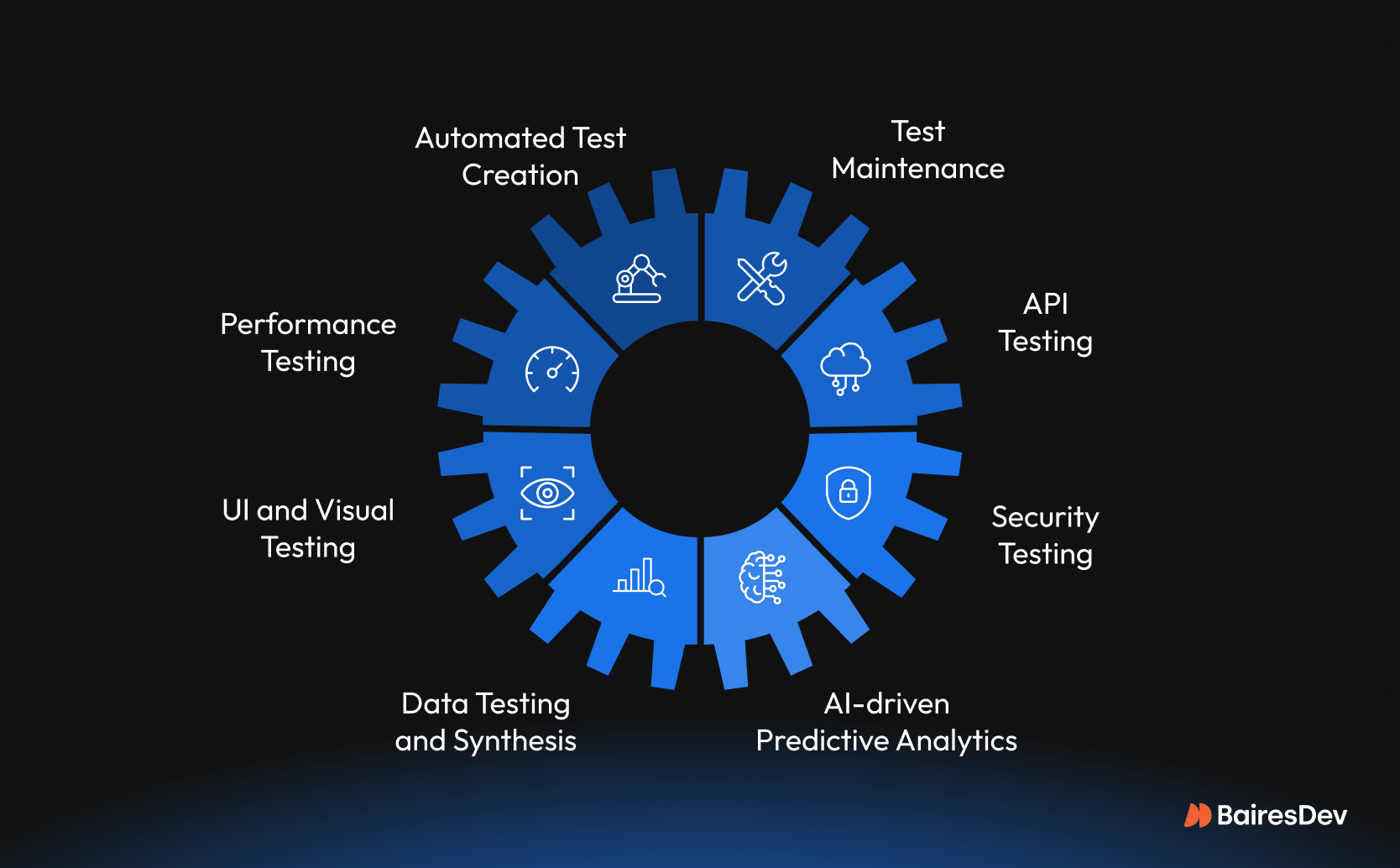

Before diving into their usefulness, it’s important to define what we mean by AI-generated test reports. In modern development pipelines, AI can:

- Generate test cases or test suites automatically from code, specifications, or user stories.

- Analyze test execution results to detect trends, anomalies, and defects.

- Produce summaries of test runs, including logs, coverage metrics, or defect predictions.

- Create human-readable, structured documentation that explains testing outcomes.

These reports are intended to give developers insights into software quality without requiring manual labor for every test cycle.
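To make the "summary of a test run" idea concrete, here is a minimal sketch of the raw counting that sits underneath any such report, AI-assisted or not. It assumes the test runner emits JUnit-style XML; the sample data, the `summarize` function, and the test names are hypothetical and exist only for illustration. An AI layer would typically add narrative on top of numbers like these.

```python
import xml.etree.ElementTree as ET

# Hypothetical JUnit-style results, made up for this example.
SAMPLE_XML = """
<testsuite name="checkout" tests="3">
  <testcase classname="cart" name="test_add_item" time="0.01"/>
  <testcase classname="cart" name="test_remove_item" time="0.02">
    <failure message="expected 0 items, got 1"/>
  </testcase>
  <testcase classname="cart" name="test_coupon" time="0.00">
    <skipped/>
  </testcase>
</testsuite>
"""


def summarize(xml_text):
    """Count passed, failed, and skipped test cases and collect failure messages."""
    root = ET.fromstring(xml_text.strip())
    summary = {"passed": 0, "failed": 0, "skipped": 0, "failures": []}
    for case in root.iter("testcase"):
        # Treat both <failure> and <error> children as a failed test.
        problem = case.find("failure")
        if problem is None:
            problem = case.find("error")
        if problem is not None:
            summary["failed"] += 1
            summary["failures"].append((case.get("name"), problem.get("message", "")))
        elif case.find("skipped") is not None:
            summary["skipped"] += 1
        else:
            summary["passed"] += 1
    return summary


summary = summarize(SAMPLE_XML)
print(summary)
```

Keeping this deterministic layer separate from any generated prose makes it easier to check whether the narrative in a report matches the underlying counts.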

But do they actually make developers more effective?

Why AI-Generated Test Reports Are Attractive

Let’s start with positive expectations — why many teams are exploring or adopting AI test automation and reporting:

1. Speed and Scale

AI can generate thousands of test cases in minutes, especially for repetitive or boilerplate scenarios. Some industry figures claim that AI-driven testing can increase test coverage by up to 30% while reducing time-to-market for releases. Many organizations also report faster testing cycles and less manual effort in regression suites.

This scale is crucial in agile and continuous integration/continuous delivery (CI/CD) environments, where frequent changes demand frequent testing.

2. Efficiency and Cost Savings

By automating test case creation and result summaries, teams free developers and QA engineers from repetitive tasks so they can focus on complex logic, edge cases, and creative problem-solving. Some reports suggest AI-generated testing can reduce overall testing cost by 20–30% thanks to automation.

3. Enhanced Defect Detection

AI systems can analyze patterns in test results, highlight root causes of recurring issues, and (in some models) predict potential defects before they occur. This can help developers preempt bugs and dramatically reduce post-release issues.

4. Reducing Human Error in Reporting

Generating comprehensive test summaries manually is tedious and error-prone. AI can consistently produce structured reports that include metrics, insights, and rationales, which in theory reduces the mistakes and omissions that creep into hand-written summaries. This can be especially helpful in large teams where standardized reporting improves transparency.

The Trust Paradox: When AI Helps and When It Misleads

Despite the promise, the reality is more nuanced. A major theme in developer feedback and research is the trust paradox: developers use AI tools heavily, but don’t fully trust their outputs — including tests and associated reports.

Lack of Context Awareness

AI models often lack deep semantic understanding of a codebase. Even advanced LLMs can generate test cases that miss critical edge conditions or misinterpret logic requirements. This means test reports generated by AI might not reflect real software risks. For example, one survey highlighted that ~60% of developers report missing context during AI test generation and review, which can lead to erroneous or incomplete results.

False Confidence and Hallucination

AI-generated content can appear convincing but be fundamentally incorrect, a phenomenon known as hallucination in AI research. Developers warn that passing tests in an AI report do not guarantee software correctness, and misleading reports can lull teams into a false sense of security. Developer communities describe cases where generated tests simply mirror buggy implementation details instead of validating intended behavior.
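The "mirrored test" problem is easier to see in a small example. The sketch below is hypothetical: `apply_discount` and both checks are invented for illustration. One check derives its expected value from the implementation, so it passes despite the bug. The other derives its expected value from the requirement, so it catches the bug.

```python
def apply_discount(price, percent):
    """Intended behavior: reduce `price` by `percent` percent."""
    # Bug: subtracts the raw number instead of a percentage of the price.
    return price - percent


def mirrored_test():
    # Expected value copied from what the code does, so the bug stays invisible.
    return apply_discount(200, 10) == 200 - 10


def behavior_test():
    # Expected value taken from the requirement: 10% off 200 is 180.
    return apply_discount(200, 10) == 180


results = {"mirrored_test": mirrored_test(), "behavior_test": behavior_test()}
print(results)
```

A report built only on the first kind of check would show green while the discount logic is wrong, which is exactly the false confidence described above.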

Review Overhead

Ironically, AI-generated tests and reports can create additional overhead. Developers still need to review and validate these outputs — often more carefully than human-written tests. Some teams report that AI-generated test code increases the volume of code to review without proportionate quality improvements.

Best Practices for Using AI Test Reports Effectively

So if “trust but verify” is the modus operandi, how can teams maximize benefits and minimize risks when adopting AI-generated test reports?

1. Use AI as an Assistant, Not a Replacement

AI should be seen as a tool that accelerates and augments — not replaces — core developer workflows. Developers should treat AI-generated reports as draft artifacts that need human review, refinement, and contextual validation.

The real value comes when AI handles repetitive tasks, and developers ensure logical and domain correctness.

2. Integrate With CI/CD Pipelines

Integrating AI reporting into automated pipelines ensures continuous quality metrics, trend analyses, and readiness gates. When tied into version control and deployment workflows, test reports can act as proactive quality signals.

Teams should ensure that AI reporting is traceable and auditable, with clear linkages to test data and execution logs.
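One way to approach traceability, sketched here under assumptions, is to store each generated summary alongside the commit it describes and a hash of the raw execution log it was derived from. The `ReportRecord` fields, the `gate` threshold, and the sample values are all hypothetical and not tied to any particular CI system.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass
class ReportRecord:
    commit_sha: str    # commit the test run was executed against
    log_sha256: str    # fingerprint of the raw execution log
    passed: int
    failed: int
    summary_text: str  # the generated, human-readable summary


def build_record(commit_sha, raw_log, passed, failed, summary_text):
    """Bind a generated summary to the exact log it was derived from."""
    digest = hashlib.sha256(raw_log.encode("utf-8")).hexdigest()
    return ReportRecord(commit_sha, digest, passed, failed, summary_text)


def gate(record, max_failures=0):
    """Readiness gate based on counted results, not on the summary text."""
    return record.failed <= max_failures


raw_log = "test_login PASSED\ntest_checkout FAILED\n"
record = build_record(
    "abc1234", raw_log, passed=1, failed=1,
    summary_text="1 of 2 tests failed (checkout).",
)
print(json.dumps(asdict(record), indent=2))
print("gate passed:", gate(record))
```

The design choice worth noting is that the gate reads the counted results rather than the generated text, so a misleading summary cannot open the gate by itself.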

3. Combine Multiple Test Sources

AI-generated tests should complement — not replace — core testing types like unit tests, integration tests, smoke tests, load tests, and user acceptance tests. Diverse test sources increase resilience against incorrect assertions or blind spots in reports.

4. Educate Developers and Testers

A critical enabler for effective AI adoption is developer literacy in how AI systems work. Teams that understand model limitations, prompting techniques, test design strategies, and evaluation criteria are more effective at using AI-generated test reports.

Continual feedback loops between developers, QA engineers, and AI systems improve outcomes over time.

Beyond the Technical: The Human and Cultural Side

While the technical facets are fundamental, there’s a larger, human-centric perspective that influences whether AI-generated test reports truly help:

The Psychological Component

Developers often cite a lack of confidence in AI outputs — not because the outputs are always wrong, but because errors can be subtle and costly. This suspicion leads to deeper scrutiny or even redundant manual checks, which can diminish the perceived value of automation.

A mature AI testing culture balances trust with safeguards: structured review practices, clear ownership of test quality, and policies that define when and how AI outputs are trusted.

The Organizational Incentives

Teams must align incentives: if QA engineers or developers feel penalized for report inaccuracies, they may reject AI tools altogether. When organizations reward responsible use — including review, adaptation, and feedback into tool refinement — AI adoption becomes collaborative and less threatening.

Case Studies: When AI Reports Made a Difference

To make this more concrete, let’s look at hypothetical but realistic scenarios where AI-generated test reports helped — and where they didn’t:

1. Startup in Fast-Paced Development

A small startup with limited QA resources adopted AI test generation and reporting to accelerate daily releases. By automating test case generation for basic workflows and integrating summarized test outcomes into dashboards, they reduced manual QA time by 40% and identified regressions earlier. AI reports helped the team prioritize fixes and ship more reliably.

The caveat: no AI-generated test or report was acted on without review, and developers used AI for exploratory scenarios rather than critical logic.

2. Large Enterprise With Reactive Culture

In contrast, a large enterprise replaced manual reporting with AI-generated test summaries but did not establish review expectations or validation checks. Over time, the AI reports showed passing metrics even as defects slipped into production — leading to loss of trust in the reporting and an eventual rollback to manual reporting.

The core lesson: automation without governance can be counterproductive.
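A validation check for this failure mode could be as simple as comparing what the reports said with what happened after release. The sketch below assumes a team records, per release, whether the report was green and how many production defects followed. The field names and data are hypothetical.

```python
def escaped_defect_rate(releases):
    """Share of releases reported green that later had a production defect."""
    green = [r for r in releases if r["report_green"]]
    if not green:
        return 0.0
    escaped = sum(1 for r in green if r["production_defects"] > 0)
    return escaped / len(green)


# Hypothetical release history, made up for this example.
releases = [
    {"version": "1.0", "report_green": True, "production_defects": 0},
    {"version": "1.1", "report_green": True, "production_defects": 2},
    {"version": "1.2", "report_green": False, "production_defects": 0},
    {"version": "1.3", "report_green": True, "production_defects": 1},
    {"version": "1.4", "report_green": True, "production_defects": 0},
]

rate = escaped_defect_rate(releases)
print(f"green reports followed by production defects: {rate:.0%}")
```

A rising value here is an early signal that the reports are drifting away from real outcomes, well before trust collapses entirely.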

3. Hybrid Approach With Augmented Intelligence

A mid-sized team leveraged AI outputs as first-pass reports and used analytics tools to merge AI results with historic test data. This hybrid system flagged anomalies and recommended additional manual checks. Over months, the team trimmed redundant tests and improved both quality and developer satisfaction.
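The merge of current results with historic test data in this scenario can be sketched roughly as follows. It assumes pass/fail history is available per test. The `flag_anomalies` function, the 0.95 stability threshold, and the test names are illustrative assumptions, not a description of any specific tool.

```python
def flag_anomalies(history, current, stable_threshold=0.95):
    """Flag results that contradict a test's history and deserve a manual look."""
    flagged = []
    for name, passed_now in current.items():
        runs = history.get(name, [])
        if not runs:
            # Nothing to compare against, so a human should look at it once.
            flagged.append((name, "no history"))
            continue
        pass_rate = sum(runs) / len(runs)
        if not passed_now and pass_rate >= stable_threshold:
            flagged.append((name, "new failure in a stable test"))
        elif passed_now and pass_rate <= 1 - stable_threshold:
            flagged.append((name, "unexpected pass in a usually failing test"))
    return flagged


# Hypothetical history: True means the test passed in that run.
history = {
    "test_login": [True] * 20,
    "test_export": [False] * 20,
    "test_search": [True, False] * 10,
}
current = {
    "test_login": False,
    "test_export": True,
    "test_search": True,
    "test_new_feature": True,
}

flags = flag_anomalies(history, current)
for name, reason in flags:
    print(f"{name}: {reason}")
```

Here `test_search` is not flagged because its history is already mixed. A real system would probably handle such flaky tests as a separate category.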

This scenario illustrates synergy — not replacement.

Are AI-Generated Test Reports the Future?

The software industry is evolving rapidly. AI-based tools for testing — including generation, execution, and reporting — are becoming ubiquitous. According to market studies, a majority of organizations are exploring or adopting AI-assisted test automation.

But the future won’t be fully automated black boxes. The most effective teams will likely combine human expertise, automated workflows, and AI-assisted insights. The smartest systems won’t just generate reports — they’ll explain their reasoning, link findings back to requirements, and provide actionable next steps.

AI-generated test reports are a piece of a larger puzzle — one that includes continuous testing, developer feedback loops, and intelligent automation integrated with human judgement.

Conclusion — Yes, But With Context

So, do AI-generated test reports truly help developers?

Yes, they can — but only when used responsibly.

AI-generated test reports provide value when they:

- Reduce repetitive tasks and free up developers for meaningful work.

- Improve visibility into testing activities across the team.

- Are integrated with pipelines, reviewed by humans, and calibrated against real outcomes.

They don’t help when they:

- Generate false confidence or incomplete insights.

- Are used blindly without validation.

- Replace critical thinking and domain knowledge.

In short, AI-generated test reports are powerful tools — not magical instant solutions. Their usefulness depends on how teams integrate them, interpret their output, and balance automation with human judgement.

For developers who embrace both the promise and limitations of AI, these reports won’t just speed up workflows — they’ll help build better, more resilient software.