In the ever‑accelerating world of software delivery, organizations constantly wrestle with the tension between speed and quality. Teams want to ship more, faster, and with fewer defects, yet they are also under pressure to maintain confidence in every release. For decades, quality assurance (QA) teams have shouldered this burden, blending manual exploratory testing with automated scripts to catch bugs before they reach customers. Now a new frontier beckons: multi‑agent testing powered by autonomous AI systems.

This article explores that frontier by addressing one fundamental question: can multi‑agent testing replace traditional QA teams? Spoiler: the answer isn’t a simple yes or no. It points instead to an evolution of roles, technologies, and organizational priorities.

The Rise of Multi‑Agent Testing: What It Is and Why It Matters

To appreciate the potential impact of multi‑agent testing, we must first understand what it is.

Traditional QA workflows rely on test scripts built on automation frameworks such as Selenium, Cypress, or Playwright. Engineers write and maintain these scripts to simulate user interactions and validate system behavior. The tests typically run in a fixed sequence and produce pass/fail results. This kind of automation works well for repeatable checks, but it also introduces significant maintenance overhead. A small change in the user interface, for example, can break dozens of test scripts, forcing QA engineers to keep updating them and troubleshooting flaky results.

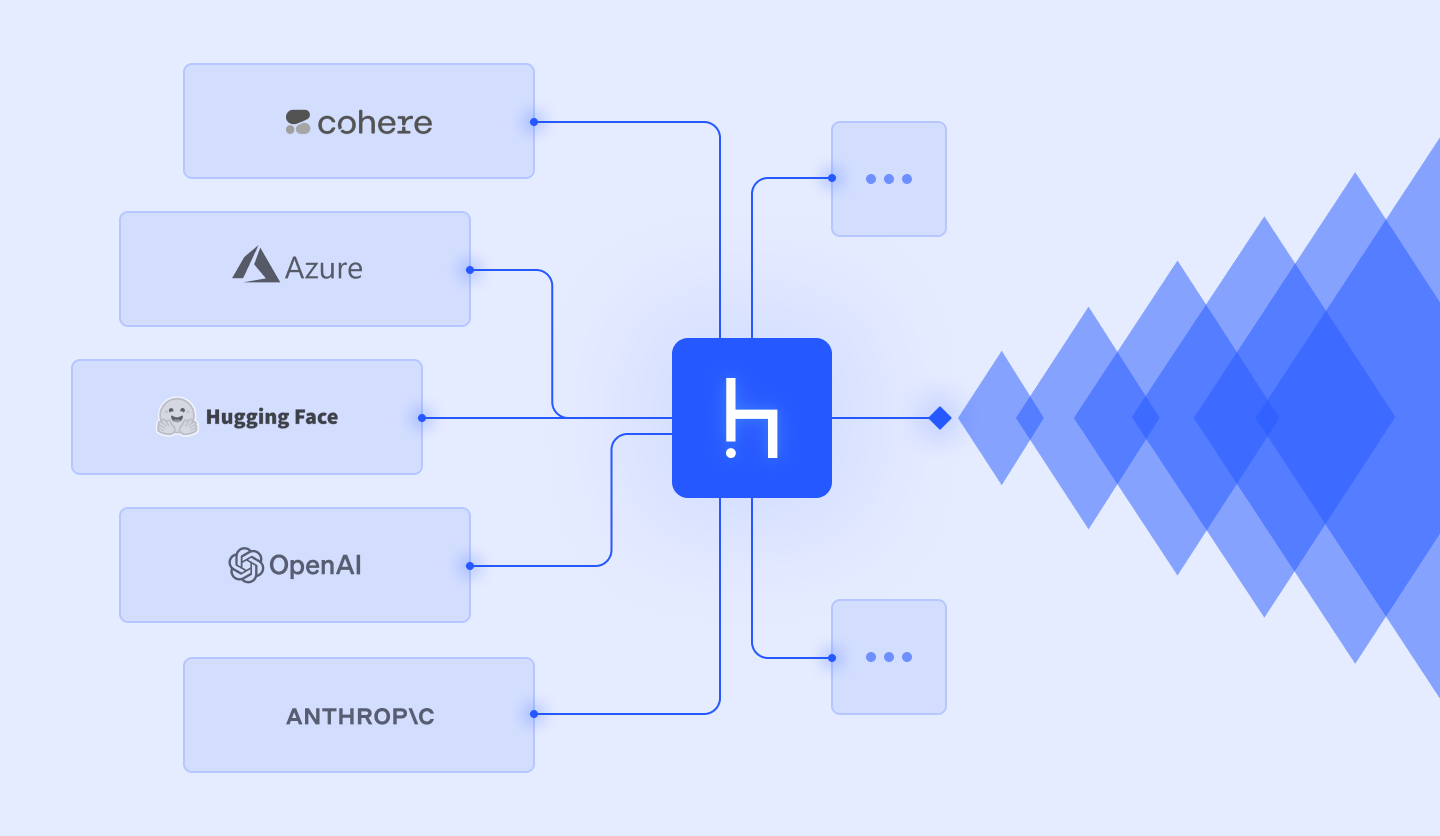

In contrast, multi‑agent testing involves a coordinated set of autonomous AI agents, each with a specialized role: generating test cases, executing tests, analyzing outcomes, or orchestrating test sequences. Rather than following brittle scripts, these agents adapt and reason about what needs to be tested based on real‑world changes.

Here’s how the key differences play out:

- Traditional QA: Script‑driven, linear, and static; requires extensive maintenance and expert upkeep.

- Multi‑Agent Testing: AI‑driven, collaborative, and dynamic; agents adapt, coordinate, and improve over time.

This paradigm shift is part of a broader trend toward agentic AI: systems capable of autonomous decision‑making, planning, and execution. Gartner, for instance, named agentic AI among its top strategic technology trends for 2025.
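To make the division of roles concrete, here is a minimal Python sketch of the four roles described above: generation, execution, analysis, and orchestration. Every name in it (`GeneratorAgent`, `ExecutorAgent`, `AnalyzerAgent`, `Orchestrator`, and the toy `is_valid_email` function under test) is hypothetical, and the "agents" are plain classes with hard‑coded behavior rather than AI models. It illustrates only how the responsibilities might be separated, not how any particular product works.

```python
from dataclasses import dataclass
from typing import Callable


def is_valid_email(value: str) -> bool:
    """Toy system under test (hypothetical)."""
    return "@" in value and "." in value.split("@")[-1]


@dataclass
class Case:
    name: str
    check: Callable[[], bool]


class GeneratorAgent:
    """Proposes test cases. A real agent would reason about the change;
    this stub returns a fixed list."""

    def generate(self, feature: str) -> list[Case]:
        return [
            Case(f"{feature}: accepts valid address",
                 lambda: is_valid_email("user@example.com")),
            Case(f"{feature}: rejects empty input",
                 lambda: not is_valid_email("")),
        ]


class ExecutorAgent:
    """Runs each case and records pass/fail; an exception counts as a failure."""

    def execute(self, cases: list[Case]) -> dict[str, bool]:
        results = {}
        for case in cases:
            try:
                results[case.name] = bool(case.check())
            except Exception:
                results[case.name] = False
        return results


class AnalyzerAgent:
    """Summarizes raw results into a small report."""

    def analyze(self, results: dict[str, bool]) -> dict:
        failed = [name for name, ok in results.items() if not ok]
        return {"total": len(results), "failed": failed}


class Orchestrator:
    """Chains the three specialized agents in order."""

    def __init__(self) -> None:
        self.generator = GeneratorAgent()
        self.executor = ExecutorAgent()
        self.analyzer = AnalyzerAgent()

    def run(self, feature: str) -> dict:
        cases = self.generator.generate(feature)
        results = self.executor.execute(cases)
        return self.analyzer.analyze(results)


report = Orchestrator().run("email field")
print(report)
```

The point of the structure is that each role can be swapped out or scaled independently, which is harder to do when generation, execution, and analysis all live inside one script.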

Why Traditional QA Struggles (and Why AI Testing Looks Promising)

Before we can judge whether multi‑agent testing can replace traditional QA teams, we have to understand why traditional QA often struggles.

1. Maintenance Burden and Fragile Scripts

One of the biggest complaints from QA practitioners is the constant cycle of maintenance required for automated tests. A UI tweak can cascade into dozens of script failures, leaving engineers tied up in debugging rather than improving quality. In some teams, QA engineers spend up to half of their time fixing existing automation rather than improving test coverage.

2. Limited Flexibility and Coverage

Traditional automation excels at predefined scenarios, but it struggles with the unplanned and unpredictable — edge cases, dynamic workflows, and real user behavior patterns. Scripted tests are only as good as the scenarios they encode.

3. Bottlenecks in Scaling

As applications grow in complexity, approaches that depend on a single test runner or on manual effort become hard to scale. Running hundreds of tests in sequence lengthens feedback loops and slows development.

Enter multi‑agent systems: these systems distribute work across multiple AI entities. Each agent specializes in tasks like UI testing, API coverage, performance checks, or integration coordination — all working concurrently. This can greatly accelerate testing cycles, improve coverage, and reduce bottlenecks.
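The effect of running checks concurrently instead of in sequence can be sketched with Python’s standard `concurrent.futures` module. The check names and the `run_check` function below are hypothetical stand‑ins: each "check" simply sleeps briefly to imitate I/O‑bound work such as waiting on a browser or an API.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def run_check(name: str) -> tuple[str, bool]:
    """Hypothetical check: sleeps to imitate waiting on a browser or API."""
    time.sleep(0.05)
    return name, True


checks = ["ui", "api", "performance", "integration"]

# One check after another.
start = time.perf_counter()
sequential_results = [run_check(name) for name in checks]
sequential_seconds = time.perf_counter() - start

# All checks at once, one worker thread per check.
start = time.perf_counter()
with ThreadPoolExecutor(max_workers=len(checks)) as pool:
    # pool.map returns results in the same order as the inputs.
    parallel_results = list(pool.map(run_check, checks))
parallel_seconds = time.perf_counter() - start

print(f"sequential: {sequential_seconds:.2f}s, parallel: {parallel_seconds:.2f}s")
```

Threads suit this sketch because the simulated work is mostly waiting. CPU‑heavy checks would need processes or separate machines, and real suites also have to deal with shared state between tests, which this example ignores.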

Can Multi‑Agent Testing Replace Traditional QA Teams?

At first glance, the answer might seem obvious. If AI agents can test faster, more adaptively, and autonomously, surely they can make traditional QA teams obsolete?

But the truth lies somewhere in the middle.

The Case for Replacement (Under Certain Conditions)

There are aspects of QA where multi‑agent systems are already outperforming traditional approaches:

1. Test Coverage and Speed

Multi‑agent testing frameworks can orchestrate hundreds of tests in parallel, adjust what they verify as the application changes, and validate complex paths without being told explicitly what to do. For example, specialized agents for change impact analysis can prioritize tests based on code changes, and execution agents can run those tests simultaneously, potentially compressing cycles that once took weeks into hours.
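Change impact analysis is easier to picture with a small example. The sketch below assumes a hand‑written map from each test to the source files it exercises (`TEST_DEPENDENCIES`, with made‑up test and file names). In practice such a map would more likely come from coverage data or static analysis. Tests are selected if they touch any changed file and are ordered by how many changed files they cover.

```python
# Hypothetical map from each test to the source files it exercises.
TEST_DEPENDENCIES = {
    "test_login": {"auth/session.py", "auth/forms.py"},
    "test_checkout": {"cart/pricing.py", "payments/gateway.py"},
    "test_profile": {"auth/session.py", "users/profile.py"},
    "test_search": {"search/index.py"},
}


def select_impacted_tests(changed_files: set[str]) -> list[str]:
    """Return tests that touch any changed file, largest overlap first."""
    scored = []
    for test, dependencies in TEST_DEPENDENCIES.items():
        overlap = len(dependencies & changed_files)
        if overlap:
            scored.append((overlap, test))
    # Sort by overlap (descending), then by name so the order is stable.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [test for _, test in scored]


impacted = select_impacted_tests({"auth/session.py", "auth/forms.py"})
print(impacted)
```

Here a change to the two `auth` files selects `test_login` and `test_profile` and skips the unrelated checkout and search tests. The selected subset can then be handed to execution agents to run in parallel.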

2. Reduced Maintenance

Unlike script‑based tests, intelligent agents can adapt to UI changes by reasoning about what has changed and adjusting their approach dynamically. This leads to fewer broken tests and less manual debugging.
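As a rough illustration of the difference, the sketch below compares a lookup that depends on one exact element id with a lookup that falls back to the element’s role and visible text when the id changes. The page snapshots and function names are hypothetical, and the fallback is a fixed rule rather than genuine reasoning, so treat it as a simplified stand‑in for what an adaptive agent might do.

```python
from typing import Optional

# Hypothetical page snapshots: the button's id changed between releases,
# but its role and visible text did not.
PAGE_BEFORE = [{"id": "submit-btn", "role": "button", "text": "Sign in"}]
PAGE_AFTER = [{"id": "login-submit", "role": "button", "text": "Sign in"}]


def find_by_id(page: list[dict], element_id: str) -> Optional[dict]:
    """Brittle lookup: succeeds only if the exact id still exists."""
    return next((el for el in page if el["id"] == element_id), None)


def find_adaptive(page: list[dict], element_id: str,
                  role: str, text: str) -> Optional[dict]:
    """Try the exact id first, then fall back to role plus visible text."""
    element = find_by_id(page, element_id)
    if element is not None:
        return element
    return next(
        (el for el in page if el["role"] == role and el["text"] == text),
        None,
    )


brittle = find_by_id(PAGE_AFTER, "submit-btn")
adapted = find_adaptive(PAGE_AFTER, "submit-btn", "button", "Sign in")
print(brittle, adapted)
```

A fallback like this can also match the wrong element, so any substitution should be logged and reviewed rather than silently accepted.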

3. Autonomous Learning and Improvement

Modern multi‑agent systems can learn from past results and continuously refine their strategies. Some research suggests that collaborative multi‑agent frameworks achieve notably higher task success rates than single‑agent baselines, and that kind of reliability is a prerequisite for autonomous testing at scale.

Together, these capabilities can reduce the need for large, traditional QA teams focused on repetitive tasks.

But There’s a Catch: Why Traditional QA Still Matters

Even with these advantages, multi‑agent testing isn’t a silver bullet — at least not yet.

1. Context and Human Insight

AI agents excel at repetitive, pattern‑based, and analytical tasks, but understanding context, business intent, and user experience nuance still requires human judgment. Traditional QA teams are experts in *exploratory testing*, a form of evaluation that is inherently creative and context‑driven, and one that AI still struggles with.

2. Unpredictable Edge Cases and Emergent Behavior

Agents can interact and coordinate, but that very interaction sometimes produces emergent behavior that is hard to predict or test. This makes certain edge cases — especially those involving unexpected user workflows or cross‑system integration — difficult to capture with fully autonomous agents. Experts have noted that testing multi‑agent systems themselves becomes more complex, as emergent behavior defies simple pass/fail patterns.

3. Governance, Compliance, and Accountability

Traditional QA teams often carry the responsibility for compliance, GDPR checks, regulatory reporting, and interpretative verification — areas where automated agents have not yet fully demonstrated reliability on their own.

4. Transformation Takes Time

Even teams already embracing automation often still struggle with basics like complete test coverage. AI testing requires quality data, integration with CI/CD pipelines, agent governance policies, and ongoing oversight — all of which require human leadership.

A Future of Collaboration: Humans + Agents

Rather than asking whether multi‑agent testing can replace traditional QA teams, a more productive question is whether it can augment, evolve, and redefine the QA role.

Consider these shifts already underway:

QA as Strategy, Not Execution

Instead of writing test scripts, QA professionals can define quality goals for AI agents: what to test, why it matters, and how much risk is acceptable. AI handles the execution; humans interpret, refine, and improve strategy.
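One way to picture "defining quality goals" is as a small, declarative policy that humans own and agents are evaluated against. The sketch below is an assumption about what such a policy could look like: `QualityGoal`, `release_blockers`, the area names, and the thresholds are all invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QualityGoal:
    area: str                # what to test
    rationale: str           # why it matters
    max_failure_rate: float  # how much risk is acceptable (0.0 to 1.0)


def release_blockers(goals: list[QualityGoal],
                     observed: dict[str, float]) -> list[str]:
    """Return the areas whose observed failure rate exceeds the goal.

    An area with no observed data is treated as fully failing, so that
    missing coverage blocks a release instead of passing silently.
    """
    blockers = []
    for goal in goals:
        rate = observed.get(goal.area, 1.0)
        if rate > goal.max_failure_rate:
            blockers.append(goal.area)
    return blockers


goals = [
    QualityGoal("checkout", "revenue-critical path", 0.0),
    QualityGoal("search", "slightly degraded results are tolerable", 0.05),
]

# Hypothetical failure rates reported by execution agents.
observed = {"checkout": 0.0, "search": 0.08}
blockers = release_blockers(goals, observed)
print(blockers)
```

The design choice worth noting is that the thresholds and rationales live with people, while the agents only supply the observed numbers.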

Continuous Collaboration

In a modern CI/CD pipeline, agents continuously validate features as they land. Humans review patterns, explore new scenarios, and mentor agents with domain knowledge — turning QA into a quality engineering partnership instead of a manual gatekeeping exercise.

Specialized Human Roles

New roles will emerge: AI QA strategists, test orchestration architects, ethical QA overseers, and quality analysts who interpret agent results and translate them into actionable insights.

In this vision, QA teams may shrink in headcount, but their members will upskill and have greater impact.

Practical Considerations for Organizations

Whether your organization is ready for multi‑agent testing depends on several practical factors:

1. Maturity of Automation

AI testing can only amplify what already exists. If your team lacks robust automation foundations, start there before layering AI agents on top.

2. Integration With DevOps

Multi‑agent systems thrive in a DevOps environment where continuous integration, delivery pipelines, and automated feedback loops are already established.

3. Skill Development

QA professionals should cultivate skills in AI oversight, prompt engineering, data analysis, and automation governance to maximize the value of agentic systems.

4. Ethical and Governance Frameworks

As autonomous systems take on more responsibility, ethical considerations — bias, fairness, accountability, and transparency — must be embedded into QA processes. Agents should be audited and monitored, not left unsupervised.
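Auditing starts with recording what each agent did and why. The following sketch shows one possible shape for such a record, using only the Python standard library. The `AuditLog` class, its fields, and the agent names are hypothetical, and a production system would need durable, tamper‑resistant storage rather than an in‑memory list.

```python
import json
from datetime import datetime, timezone


class AuditLog:
    """In-memory record of agent decisions (illustrative only)."""

    def __init__(self) -> None:
        self._entries: list[dict] = []

    def record(self, agent: str, action: str, reason: str) -> dict:
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "action": action,
            "reason": reason,
        }
        self._entries.append(entry)
        return entry

    def by_agent(self, agent: str) -> list[dict]:
        """Return every entry recorded for one agent, oldest first."""
        return [e for e in self._entries if e["agent"] == agent]

    def export(self) -> str:
        """Serialize entries as JSON Lines for human review."""
        return "\n".join(json.dumps(e, sort_keys=True) for e in self._entries)


log = AuditLog()
log.record("impact-agent", "skipped test_search", "no changed files in search/")
log.record("executor-agent", "ran test_login", "selected by impact-agent")
print(log.export())
```

Capturing the reason alongside the action is what lets a human reviewer question a decision, such as a skipped test, after the fact.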

Conclusion: Replacement or Reinvention?

Can multi‑agent testing replace traditional QA teams? Not entirely — but it will redefine them.

Traditional QA professionals will still provide strategic oversight, interpret complex behaviors, and ensure that software aligns with human expectations. But repetitive, maintenance‑heavy tasks that once consumed much of their time will increasingly be handled by intelligent agents.

The true promise of multi‑agent testing is not elimination but transformation: the elevation of quality assurance from script execution to strategic quality engineering.